Red Palm Weevil, a little creature with a major impact

Date palms and canary palms have been used since ancient times for commerce, nutrition, landscape enhancement, and other activities in the Mediterranean, Middle East, and North Africa regions. Currently, it is estimated that they cover more than 1.3 million hectares. Unfortunately, the Red Palm Weevil (RPW), a lethal pest that threatens these palms, has spread worldwide. This could trigger a future crisis, since palms are high-value assets for the socio-economic development of these regions.

As palm trees are spread over large territories around the globe, it is hardly feasible to locate each palm tree and visually check it for symptoms caused by the RPW. But what if we used earth observation data and remote sensing technology to detect and scan palm trees? In the PalmWatch project, we are currently evaluating the potential of, for example, drone-based image processing to detect this destructive pest, with the aim of setting up an automated processing chain and visualization platform that indicates infestation probabilities.

Pictures of weevils captured in the field while closely examining infested trees

First things first! Detecting palm trees in a timely and efficient way

As the RPW can affect palms tremendously, it is vital to detect the infestation at an early stage. With few externally visible signs, and palms widely dispersed in space, remote sensing could provide an efficient and accurate way to monitor the health of palms at a large scale. But before we can set up such a processing chain and visualization platform, three major tasks have to be completed:

- Large scale palm tree mapping (including date and canary palms)

- Detection of RPW-affected palms

- Classification of the RPW infestation level

In June 2019, we started with the first task. Timely detection is crucial for the survival of the palm trees, but information on their number and distribution is scarce, so close monitoring of the pest is not currently possible. We therefore trained a palm detector based on deep learning to geolocate individual palm trees on aerial RGB imagery covering the whole Alicante province.

Aerial images and deep learning for large scale mapping

Let me explain how we developed this palm detector and palm inventory. First, we obtained several aerial images of the Alicante province in Spain, covering more than five thousand square kilometers. We selected this region as it is an iconic place for date and canary palms. We started by manually annotating the crowns of date and canary palms in some of those images. This allowed us to train our deep-learning object detector so that it can classify and locate palm crowns automatically. The detector is based on convolutional neural networks (CNNs), which have gained popularity in the remote sensing domain in recent years because they are designed to process data in the form of multiple arrays, such as multi-band remote sensing images. Also, as CNNs learn directly from imagery, the expert knowledge needed to extract features in conventional machine learning methods is no longer required.

Second, we applied the detector to the complete imagery dataset of Alicante to obtain an inventory of individual Phoenix palms (date and canary palms), which can be accessed on this PalmWatch Earth Engine viewer.
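To make the "geolocate" part more concrete: an object detector returns bounding boxes in pixel coordinates, which then have to be converted to map coordinates. The snippet below is a minimal sketch of that conversion, assuming a georeferenced image described by a GDAL-style six-parameter geotransform. The function name and the sample values are hypothetical and are not taken from the PalmWatch code.

```python
from typing import Tuple

# GDAL-style geotransform (assumed convention):
# (x_origin, pixel_width, row_rotation, y_origin, column_rotation, pixel_height)
# For a north-up image both rotation terms are 0 and pixel_height is negative.
GeoTransform = Tuple[float, float, float, float, float, float]
BBox = Tuple[float, float, float, float]  # (xmin, ymin, xmax, ymax) in pixels


def bbox_center_to_map(bbox: BBox, gt: GeoTransform) -> Tuple[float, float]:
    """Convert the center of a pixel bounding box to map coordinates."""
    xmin, ymin, xmax, ymax = bbox
    col = (xmin + xmax) / 2.0  # center column, in pixels
    row = (ymin + ymax) / 2.0  # center row, in pixels
    x = gt[0] + col * gt[1] + row * gt[2]
    y = gt[3] + col * gt[4] + row * gt[5]
    return x, y


# Hypothetical example: 0.25 m pixels, made-up origin in a projected CRS.
example_gt = (700000.0, 0.25, 0.0, 4250000.0, 0.0, -0.25)
example_bbox = (100, 200, 140, 240)  # a detected crown, in pixel coordinates
print(bbox_center_to_map(example_bbox, example_gt))  # (700030.0, 4249945.0)
```

Each detected crown then becomes a single point in the inventory, independent of the image tile it was found in.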

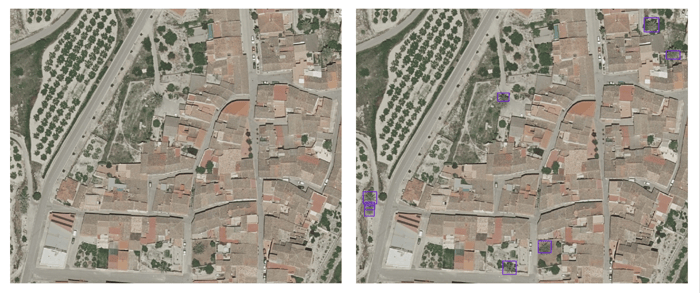

Examples of palm crown detection - Original data source: Ortofoto RGB 2018 CC BY 4.0


© Institut Cartogràfic Valencià, Generalitat; OrtoPNOA 2018 CC BY 4.0 www.scne.es.

Experimental results show that the trained palm detection model provides a fast and straightforward method to geolocate isolated and densely planted palms from high-resolution aerial RGB images. Moreover, it makes it possible to conduct a palm tree inventory at a wide scale and at reduced cost compared to inventories based on field measurements. The detector was able to geolocate palms in different scenes (e.g., nurseries, parks, gardens, plus a wide range of natural and semi-natural habitats), achieving 86% precision. In total, 1,505,605 palms were mapped over an area of 5,816 km².
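For readers less familiar with the metric: precision is the share of detections that correspond to a real palm, i.e. true positives divided by all detections. The sketch below shows one common way to compute it for bounding boxes, by matching each detection to an annotated crown using intersection over union (IoU). The 0.5 IoU threshold, the greedy matching, and the sample boxes are assumptions for illustration; the evaluation protocol actually used is described in the paper.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (xmin, ymin, xmax, ymax)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def precision(detections, ground_truth, iou_threshold=0.5):
    """Fraction of detections matched to a distinct annotated crown.

    Greedy matching in the order given; a fuller evaluation would usually
    process detections in descending order of confidence score.
    """
    unmatched = list(ground_truth)
    true_positives = 0
    for det in detections:
        best = max(unmatched, key=lambda gt: iou(det, gt), default=None)
        if best is not None and iou(det, best) >= iou_threshold:
            true_positives += 1
            unmatched.remove(best)  # each annotation can be matched only once
    return true_positives / len(detections) if detections else 0.0


# Made-up boxes: two detections hit annotated crowns, one is a false positive.
detections = [(0, 0, 10, 10), (20, 20, 30, 30), (50, 50, 60, 60)]
annotations = [(1, 1, 11, 11), (20, 20, 30, 30)]
print(round(precision(detections, annotations), 2))  # 0.67
```

Read this way, 86% precision means that roughly 86 out of every 100 detections are real palms.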

This inventory constitutes a baseline that will allow us to take on the next step of the PalmWatch project, which is the detection of infested palms. More details on the work conducted in this step can be found in our peer-reviewed paper. The figure above shows examples of palm crown detections.

Detection of affected palms and classification of infestation level

But our ultimate goal is still to detect RPW-affected palms and classify their infestation level. Currently, regular field and flight campaigns are being carried out in the town of Elche, located in the Alicante province. The aim is to capture detailed aerial imagery and to monitor the health of date and canary palm trees. Together with a team of researchers and RPW experts, we inspect palm trees for symptoms of RPW infestation.

Pictures of our field campaign in Alicante, Spain in July 2019 where we inspected date and canary palms


As a next step, the spectral and thermal information we expect to acquire from the remote images will be analyzed to develop an RPW detection algorithm. The palm tree maps created in the first step will already give an insight into the possible spread of the RPW. If the results of the spectral and thermal analyses are satisfactory, new flight campaigns can be recommended in specific regions where the pest pressure is high. Ultimately, all located palms will be classified into health classes (healthy, infested, and dead) and visualized in an RPW probability map. This will make the outcome of the project accessible to people who are not remote sensing experts, who will benefit the most from such maps.
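As an illustration of what such a map layer could contain, the sketch below packages palms with a health class and an infestation probability as GeoJSON point features, a format most web map viewers can display. This is only an assumed output format: the property names, the coordinates, and the probabilities are made up, and the RPW detection algorithm itself is not shown because it is still to be developed.

```python
import json

HEALTH_CLASSES = ("healthy", "infested", "dead")  # classes named in the project plan


def palm_to_feature(lon, lat, health_class, probability):
    """Build one GeoJSON point feature for a palm (property names are hypothetical)."""
    if health_class not in HEALTH_CLASSES:
        raise ValueError(f"unknown health class: {health_class!r}")
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    return {
        "type": "Feature",
        "geometry": {"type": "Point", "coordinates": [lon, lat]},
        "properties": {"health_class": health_class, "rpw_probability": probability},
    }


def palms_to_geojson(palms):
    """Wrap (lon, lat, health_class, probability) tuples in a FeatureCollection."""
    return {
        "type": "FeatureCollection",
        "features": [palm_to_feature(*palm) for palm in palms],
    }


# Made-up palms near Elche; these values are not project results.
palms = [(-0.70, 38.27, "healthy", 0.05), (-0.69, 38.26, "infested", 0.83)]
geojson_text = json.dumps(palms_to_geojson(palms), indent=2)
print(geojson_text)
```

A viewer could then color each point by `health_class` or shade it by `rpw_probability`.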

Follow us via our remote sensing blog or via Twitter to stay informed about our next steps and results in the PalmWatch project. The first steps in our battle against the weevil have been taken, and we hope to share additional results soon so that you can keep on dreaming of beautiful beaches with healthy palm trees!

Related scientific paper:

Culman M., Delalieux S., Van Tricht K. (2020). Individual Palm Tree Detection Using Deep Learning on RGB Imagery to Support Tree Inventory. Remote Sens. 12(21), 3476. https://doi.org/10.3390/rs12213476
