From artificial neurons to automatic detection of small waterways

Deep learning is an amazing technology. Compared with traditional methods that relied on hand-engineered features, it has brought a leap forward in object detection accuracy, made possible by the parallel computing power of GPUs and the availability of large amounts of labelled data. Humans still need to define the architecture of the deep learning network, but the feature kernels are learned automatically from the data while the network is being trained. Of course, we do not only use deep learning to localize ditches (an image segmentation task). As part of our continuous development of new, user-friendly image processing tools and services, we apply deep learning models for:

- classification of images from aerial photography – deciding if an object we are looking for is present or not

- object localization – determining the position of the object in the image

- semantic segmentation – segmenting the image into a discrete number of classes

- instance segmentation – distinguishing between different instances of the same object class
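To make the difference between the last two tasks concrete: semantic segmentation assigns a class to every pixel, while instance segmentation also tells separate objects of the same class apart. The sketch below is only an illustration, not the method used in this project: it takes a binary semantic "ditch" mask and splits it into instances with plain connected-component labelling (8-connectivity, breadth-first search). A real instance segmentation model learns to separate touching objects, which this naive approach cannot do.

```python
from collections import deque

import numpy as np


def label_instances(mask):
    """Split a binary semantic mask into 8-connected instances.

    Returns an int array where 0 is background and 1..n number the objects.
    """
    mask = np.asarray(mask, dtype=bool)
    labels = np.zeros(mask.shape, dtype=int)
    h, w = mask.shape
    n = 0
    for r in range(h):
        for c in range(w):
            if mask[r, c] and labels[r, c] == 0:
                n += 1  # start a new instance at an unlabelled foreground pixel
                labels[r, c] = n
                queue = deque([(r, c)])
                while queue:  # flood-fill all pixels connected to it
                    y, x = queue.popleft()
                    for dy in (-1, 0, 1):
                        for dx in (-1, 0, 1):
                            ny, nx = y + dy, x + dx
                            if (0 <= ny < h and 0 <= nx < w
                                    and mask[ny, nx] and labels[ny, nx] == 0):
                                labels[ny, nx] = n
                                queue.append((ny, nx))
    return labels


# Semantic mask with two separate ditches -> instance labels 1 and 2
ditches = np.array([
    [1, 1, 0, 0, 0],
    [0, 0, 0, 0, 1],
    [0, 0, 0, 0, 1],
])
print(label_instances(ditches))
```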

Save time and costs

For the task at hand, localizing ditches based on a digital elevation model (DEM), we draw inspiration from the medical community: we apply a deep learning model that was originally developed for segmenting neuronal structures, a challenge launched in 2012 at the IEEE International Symposium on Biomedical Imaging (ISBI).
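The post does not name the architecture. The network best known from the ISBI neuronal-structure segmentation challenge is U-Net (Ronneberger et al., 2015), an encoder-decoder with skip connections; assuming that is the model meant here, the sketch below traces how the size of a square input tile changes through it. Each level applies two 3×3 convolutions, the contracting path halves the size with 2×2 max pooling, and the expanding path doubles it with 2×2 up-convolutions. The function name and the `depth`/`padded` parameters are illustrative, not taken from the project.

```python
def unet_output_size(input_size: int, depth: int = 4, padded: bool = False) -> int:
    """Trace the side length of a square tile through a U-Net-style network.

    Without padding ('valid' convolutions) each 3x3 convolution trims 2 pixels,
    so the two convolutions per level trim 4. With padding, sizes are kept.
    """
    trim = 0 if padded else 4
    size = input_size
    for _ in range(depth):          # contracting path
        size -= trim                # two 3x3 convolutions
        if size % 2:
            raise ValueError("tile size must be even before each pooling step")
        size //= 2                  # 2x2 max pooling
    size -= trim                    # bottleneck convolutions
    for _ in range(depth):          # expanding path
        size = size * 2 - trim      # 2x2 up-convolution, then two convolutions
    return size


print(unet_output_size(572))               # 388, the tile sizes in the U-Net paper
print(unet_output_size(512, padded=True))  # 512: padded convolutions keep the size
```

With padded convolutions the output map has the same dimensions as the input, which matches the behaviour described further on in this post; the unpadded original produces a smaller central crop.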

The Flemish administration keeps track of all unnavigable waterways in Flanders and collects this information in a digital atlas. Mapping these small waterways through field measurements is very time-consuming. This project investigates whether a detailed digital elevation model (DEM) of Flanders can be used to detect small waterways and ditches automatically. This approach could save considerable time and money, and it could also provide information on the width and depth of each waterway in addition to its location.


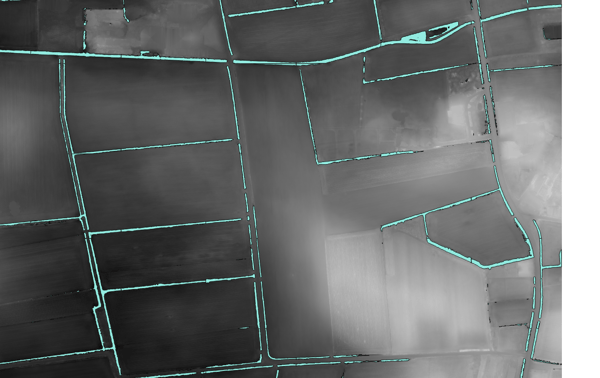

Neural net input = digital elevation model (DEM) – neural net output = waterway/ditch detections on top of the DEM

LiDAR dataset for Flanders

The digital elevation model that we use is derived from the LiDAR dataset produced by the Flemish Information Agency (Informatie Vlaanderen – IV). It was acquired between February 2013 and March 2015 and provides height information at a density of 16 points per m². LiDAR (Light Detection And Ranging) uses the reflection of pulsed laser light to measure the distance to objects. The main advantage of LiDAR for height measurements is that some of the pulses penetrate vegetation: while some laser pulses are reflected by the branches and leaves of trees, others reach the ground surface (especially in winter, when the leaves have fallen). The result is a point cloud with multiple returns per location, providing information on the height of the top of the canopy as well as of the ground surface. To identify ditches for water management, we use the last-return points (pulses that reflect off the ground are the last to return), which give us a detailed height map of the ground surface.
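A simplified sketch of the last-return idea, assuming the point cloud has already been read into arrays with the per-point return number and number of returns (as stored in LAS files). It keeps only last returns and grids them, storing the lowest height per cell. This is an illustration only: production ground models such as the one from Informatie Vlaanderen rely on proper ground classification and interpolation, and the function name and `cell` parameter are hypothetical.

```python
import numpy as np


def last_return_dem(x, y, z, return_num, num_returns, cell=1.0):
    """Grid last-return LiDAR points into a simple elevation raster.

    Keeps points whose return number equals the number of returns for that
    pulse, and stores the lowest height per cell; empty cells become NaN.
    """
    x, y, z = (np.asarray(a, dtype=float) for a in (x, y, z))
    last = np.asarray(return_num) == np.asarray(num_returns)
    x, y, z = x[last], y[last], z[last]
    col = ((x - x.min()) // cell).astype(int)
    row = ((y.max() - y) // cell).astype(int)    # row 0 is the northern edge
    dem = np.full((row.max() + 1, col.max() + 1), np.inf)
    np.minimum.at(dem, (row, col), z)            # lowest last return per cell
    dem[np.isinf(dem)] = np.nan                  # cells without any points
    return dem


# One pulse with a canopy hit (10 m) and a ground hit (2 m), plus a single-return pulse
dem = last_return_dem(x=[0.5, 0.5, 1.5], y=[0.5, 0.5, 0.5], z=[10.0, 2.0, 3.0],
                      return_num=[1, 2, 1], num_returns=[2, 2, 1])
print(dem)
```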

Training our model

Supervised learning requires a lot of training data. This means we have to manually annotate the pixels that belong to a ditch and feed that information to the neural network during training. Using standard GIS applications, we manually draw polygons that closely match known ditches, thus creating a ditch ‘mask’ that serves as the ground truth during training. This alone does not yield enough samples, so we turn to data augmentation techniques such as rotation, random cropping, mirroring and elastic deformation to create thousands of training images from a small set of ground truth samples.
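A minimal sketch of the geometric augmentations mentioned above (rotation by multiples of 90°, mirroring and random cropping), assuming DEM tiles and ditch masks are NumPy arrays of equal shape. The key point is that the same random transform is applied to the tile and its mask, so the labels stay aligned. The function name and the 256-pixel crop size are illustrative assumptions; elastic deformation is left out because it needs interpolation, for example with `scipy.ndimage.map_coordinates`.

```python
import numpy as np


def augment(dem, mask, rng, crop=256):
    """Apply one random geometric augmentation to a DEM tile and its ditch mask.

    The same rotation, mirroring and crop are applied to both arrays.
    """
    k = rng.integers(4)                        # rotate by 0, 90, 180 or 270 degrees
    dem, mask = np.rot90(dem, k), np.rot90(mask, k)
    if rng.random() < 0.5:                     # mirror horizontally half of the time
        dem, mask = np.fliplr(dem), np.fliplr(mask)
    h, w = dem.shape
    top = rng.integers(h - crop + 1)           # random crop position
    left = rng.integers(w - crop + 1)
    window = (slice(top, top + crop), slice(left, left + crop))
    return dem[window].copy(), mask[window].copy()


# Synthetic tile and mask, standing in for a real annotated DEM tile
rng = np.random.default_rng(0)
dem = rng.normal(size=(300, 300))
mask = dem > 1.5
samples = [augment(dem, mask, rng) for _ in range(1000)]
```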

To enhance the contrast in the input image and make it easier for the network to learn, we apply Contrast Limited Adaptive Histogram Equalization (CLAHE). The slope of the DEM also provides direct visual clues to where a ditch is present, so both the DEM and the derived slope image are fed to the network as input. The network then recognizes the ditches and produces an output map with the same dimensions as the input image, labelling the pixels that belong to a ditch. Finally, the results are converted into vectors for easy integration into a GIS.
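The post does not specify how the slope image is derived. A common choice, assumed in this sketch, is the gradient magnitude from central differences (`numpy.gradient`) converted to an angle in degrees. The DEM and its slope are then stacked into a two-channel array of shape (channels, height, width). The function names and the 1 m default cell size are hypothetical.

```python
import numpy as np


def slope_degrees(dem, cell=1.0):
    """Slope of a DEM in degrees, from central-difference height gradients."""
    dz_dy, dz_dx = np.gradient(dem, cell)      # derivatives along rows, columns
    return np.degrees(np.arctan(np.hypot(dz_dx, dz_dy)))


def network_input(dem, cell=1.0):
    """Stack the DEM and its slope as a 2-channel (channels, H, W) array."""
    dem = np.asarray(dem, dtype=float)
    return np.stack([dem, slope_degrees(dem, cell)])


# A tilted plane rising 1 m per 1 m cell has a slope of 45 degrees everywhere
plane = np.tile(np.arange(5.0), (4, 1))
x = network_input(plane)
print(x.shape)
```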

CLAHE to enhance local contrasts in the DEM
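Full CLAHE implementations are available in scikit-image (`skimage.exposure.equalize_adapthist`) and OpenCV (`cv2.createCLAHE`). CLAHE equalizes the histogram of each local tile and blends the mappings of neighbouring tiles; the "contrast limited" part clips each tile histogram before equalizing, so that noise in flat areas is not over-amplified. The simplified sketch below shows only that clipping step, applied globally rather than per tile. Its `clip_limit` is expressed as a fraction of the pixel count, an assumption of this sketch that does not match the scale used by those libraries.

```python
import numpy as np


def clipped_equalize(img, clip_limit=0.01, bins=256):
    """Global contrast-limited histogram equalization, output in [0, 1].

    Histogram bins above clip_limit * pixel_count are clipped, and the excess
    is spread evenly over all bins before the mapping (the CDF) is built.
    CLAHE proper does this per tile and interpolates between tiles.
    """
    img = np.asarray(img, dtype=float)
    lo, hi = img.min(), img.max()
    if hi == lo:
        return np.zeros_like(img)              # flat image: nothing to stretch
    idx = np.minimum(((img - lo) / (hi - lo) * bins).astype(int), bins - 1)
    hist = np.bincount(idx.ravel(), minlength=bins).astype(float)
    limit = clip_limit * img.size
    excess = np.clip(hist - limit, 0, None).sum()
    hist = np.minimum(hist, limit) + excess / bins   # clip, then redistribute
    cdf = np.cumsum(hist)
    cdf = (cdf - cdf[0]) / (cdf[-1] - cdf[0])        # normalize to [0, 1]
    return cdf[idx]


rng = np.random.default_rng(1)
heights = rng.gamma(2.0, size=(64, 64))        # skewed synthetic height values
equalized = clipped_equalize(heights)
```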

The extensive potential of deep learning

Deep learning allows us to extract information from massive amounts of remote sensing data efficiently. Think, for example, of the automatic delineation of farmland fields, which allows researchers to group satellite information per field without having to manually annotate the field borders of thousands of fields.

Other examples are the automatic and reliable updating of a country's building registers, or mapping the expansion of invasive flora. Precision agriculture can also benefit from deep learning, for example through yield prediction in orchards or disease detection in croplands. Many of these topics are currently under investigation, so stay tuned for more news.

Example of elastic deformation as a data augmentation technique
